Two or three years ago, most developers were experimenting with AI to see what it could do reliably. Today the challenge is architecting AI systems responsibly and at scale. Here’s what we’re seeing in the market and what it means for developers.

Agentic AI has left the lab

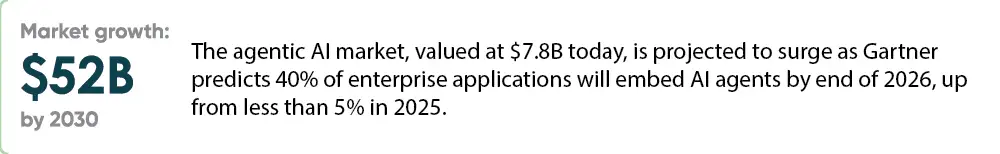

By 2026, the focus has shifted from reactive AI to autonomous AI agents. Earlier systems operated on a prompt-response model. Agentic systems independently plan multi-step actions, use tools, call APIs, and iterate toward goals with minimal human supervision.

From a developer’s perspective, this means a fundamental architecture shift. The six core agentic design patterns have become the vocabulary of modern agent systems. The engineering challenge is no longer just writing good prompts; it is designing reliable orchestration logic across agent boundaries.

Specialized multi-agent architectures have had a significant impact. Rather than one massive LLM doing everything, leading teams deploy conductors that coordinate specialist agents: one agent does research, another implements, a third validates. This mirrors effective human engineering teams.
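The conductor pattern described above can be sketched in a few lines. This is a minimal illustration, not a production design: the three agent functions are hypothetical stand-ins for LLM-backed agents, and the `Conductor` class, its retry limit, and its trace format are our own assumptions.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical specialist agents. In a real system each would wrap an LLM call.
Agent = Callable[[str], str]


def research_agent(task: str) -> str:
    return f"notes for: {task}"


def implement_agent(notes: str) -> str:
    return f"draft based on ({notes})"


def validate_agent(draft: str) -> str:
    # Toy check: accept any draft that references the research notes.
    return "approved" if "notes for" in draft else "rejected"


@dataclass
class Conductor:
    """Routes one task through research -> implement -> validate."""

    max_attempts: int = 2
    trace: list = field(default_factory=list)

    def _step(self, name: str, agent: Agent, payload: str) -> str:
        result = agent(payload)
        # Record every hand-off so the chain can be inspected later.
        self.trace.append((name, result))
        return result

    def run(self, task: str) -> str:
        for _ in range(self.max_attempts):
            notes = self._step("research", research_agent, task)
            draft = self._step("implement", implement_agent, notes)
            verdict = self._step("validate", validate_agent, draft)
            if verdict == "approved":
                return draft
        raise RuntimeError("validation failed after retries")
```

Calling `Conductor().run("add pagination")` passes the task through all three specialists and returns the validated draft. The point of the sketch is the shape: the conductor owns sequencing, retries, and the trace, while each specialist stays small and replaceable.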

Protocol standardization

Two open protocols have reshaped how AI agents connect to the world. Every developer building production AI systems needs to understand them.

- Model Context Protocol (MCP) has become the standard for connecting agents to external tools, databases, and APIs. Because it is an open standard, the number of available connectors is growing rapidly. What once required custom integration work is now largely plug-and-play.

- Agent-to-Agent Protocol (A2A) defines how agents from different vendors and platforms communicate. IBM’s Agent Communication Protocol (ACP) merged into A2A in August 2025, consolidating the standard.

These protocols enable cross-platform agent collaboration that wasn’t previously possible. Developers no longer need to build bespoke connectors for each data source or tool. The ecosystem is converging on composable, interoperable infrastructure, a shift as significant as REST standardizing web APIs in the 2000s.
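To make the MCP side of this concrete, here is a minimal sketch of the message shape behind a tool call. MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method with a tool name and arguments. The helper names and the `search_tickets` tool in the usage note are hypothetical, the transport (stdio or HTTP) is left out, and the field names should be checked against the current MCP specification before you rely on them.

```python
import json
from itertools import count

_ids = count(1)  # simple request id generator for this sketch


def make_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request in the shape MCP uses for tool calls."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


def extract_text(response_json: str) -> str:
    """Join the text parts of a tool result; raise on protocol or tool errors."""
    response = json.loads(response_json)
    if "error" in response:
        # JSON-RPC level failure (unknown method, invalid params, ...).
        raise RuntimeError(response["error"].get("message", "unknown error"))
    result = response["result"]
    if result.get("isError"):
        # The tool ran but reported a failure of its own.
        raise RuntimeError("tool reported an error")
    return "".join(
        part["text"] for part in result["content"] if part.get("type") == "text"
    )
```

For example, `make_tool_call("search_tickets", {"query": "login bug"})` yields a request string you could hand to any MCP transport. In practice most teams would use an MCP SDK rather than building messages by hand; the sketch only shows why connectors become interchangeable once every tool speaks the same envelope.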

AI coding tools reshape the developer role

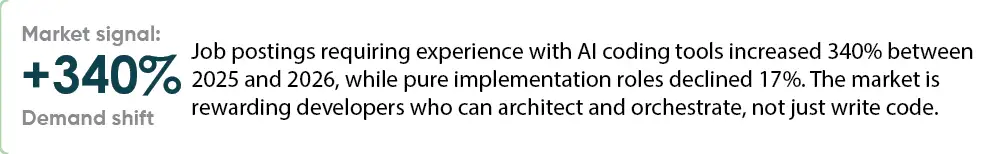

The tooling landscape has matured quickly. GitHub Copilot’s agent mode can handle complete issue-to-pull-request workflows autonomously. Cursor has earned a loyal following for its fluid multi-file editing and natural language codebase search. Context windows have expanded dramatically: leading tools now operate with 200K to 1 million tokens, letting AI consider entire microservices, API contracts, and database schemas simultaneously.

The developer experience is evolving accordingly. Senior engineers describe the shift as moving from “writing every line” to “conducting an orchestra of AI agents while focusing on parts requiring deep domain expertise.” Junior developers report dramatically faster skill development. A reported 3.2x improvement in onboarding speed reflects how AI assistance accelerates mastery of complex codebases.

“The developers thriving in 2026 are those who treat AI coding tools as a lever for architectural thinking, not a replacement for it.”

– Kibernum Engineering Team

RAG is now enterprise infrastructure

Retrieval-Augmented Generation (RAG) has crossed from experiment to baseline. Now the leading edge is Agentic RAG, which combines autonomous agents with retrieval systems. Agents decide what to search for, evaluate relevance dynamically, and iteratively refine their research. This is a meaningful architectural upgrade, but it comes with compounding risk. A poor retrieval decision early in an agentic chain can cascade through every subsequent step.
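The retrieve, evaluate, refine loop can be sketched with a toy in-memory corpus. Everything here is an assumption made for illustration: the two documents, the keyword-overlap score standing in for embedding similarity, and the `refine` function standing in for an LLM query rewrite.

```python
import re

# Hypothetical two-document corpus.
CORPUS = {
    "doc1": "refund policy: refunds are issued within 14 days",
    "doc2": "shipping times vary by region",
}


def tokenize(text: str) -> set:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str):
    """Return the best document id and the fraction of query terms it covers."""
    terms = tokenize(query)
    best_id, best_score = "", 0.0
    for doc_id, text in CORPUS.items():
        score = len(terms & tokenize(text)) / len(terms) if terms else 0.0
        if score > best_score:
            best_id, best_score = doc_id, score
    return best_id, best_score


def refine(query: str) -> str:
    """Stand-in for an LLM rewrite: drop the last word of the query."""
    words = query.split()
    return " ".join(words[:-1]) if len(words) > 1 else query


def agentic_retrieve(query: str, threshold: float = 0.5, max_steps: int = 3):
    """Retrieve, judge relevance, and refine until good enough or out of steps."""
    history = []
    for _ in range(max_steps):
        doc_id, score = retrieve(query)
        history.append((query, doc_id, score))
        if score >= threshold:
            return doc_id, history
        query = refine(query)
    # Refusing to answer is safer than passing a weak hit down the chain.
    return None, history
```

The `threshold`, `max_steps`, and `history` pieces are where the cascade risk gets managed: a weak hit is never passed downstream silently, the loop cannot run forever, and every retrieval decision is available for later review.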

Decisions made today about vector databases, embedding models, and chunking strategies will compound in value for years. Teams investing in robust RAG infrastructure now are building a durable competitive advantage.

For developers, the practical focus is on:

- Choosing the right vector store for your latency requirements

- Designing chunking strategies matched to document structure

- Implementing metadata filtering to reduce irrelevant retrieval

- Building evaluation pipelines to catch hallucinations before they reach production
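Two of the items above, structure-aware chunking and metadata filtering, fit in a short sketch. It assumes documents whose sections start with `# ` heading lines; the `Chunk` type and both function names are hypothetical, and a real vector store would apply the filter on its side rather than in Python.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    metadata: dict


def chunk_by_heading(doc: str, source: str) -> list:
    """Split on lines starting with '# ', storing each heading as metadata."""
    chunks = []
    heading, lines = "preamble", []
    # A trailing sentinel heading flushes the final section.
    for line in doc.splitlines() + ["# "]:
        if line.startswith("# "):
            body = "\n".join(lines).strip()
            if body:
                chunks.append(Chunk(body, {"source": source, "section": heading}))
            heading, lines = line[2:].strip(), []
        else:
            lines.append(line)
    return chunks


def filter_chunks(chunks: list, **required) -> list:
    """Keep only chunks whose metadata matches every required key/value pair."""
    return [
        c for c in chunks
        if all(c.metadata.get(key) == value for key, value in required.items())
    ]
```

Running `filter_chunks(chunks, section="Refunds")` before similarity search narrows the candidate set, so irrelevant sections never compete for the top results.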

Small models are the quiet revolution

Enterprises are discovering a counterintuitive truth: smaller, domain-specific models often outperform general-purpose giants for production workloads.

The logic is practical. Many enterprises can’t send sensitive data to external APIs. You can’t run 175-billion-parameter models on edge devices. A model fine-tuned on your healthcare records or financial instruments can understand your domain better than a general-purpose system, and it runs at a fraction of the cost and latency.

The emerging strategy is to build hybrid systems that combine frontier models for complex reasoning and creative synthesis with small, domain-specific models for everything that needs to be fast, private, or embedded. Architects for these hybrid systems are increasingly in demand.
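A hybrid system needs a routing decision somewhere. The sketch below shows one way to express it, assuming each request arrives already tagged; the `Request` fields, the 500 ms latency cutoff, and the two model labels are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    prompt: str
    contains_sensitive_data: bool = False
    needs_complex_reasoning: bool = False
    max_latency_ms: int = 2000


def choose_model(req: Request) -> str:
    """Return 'small-local' or 'frontier-api' (both hypothetical labels)."""
    # Privacy comes first: sensitive data stays on the local model.
    if req.contains_sensitive_data:
        return "small-local"
    # Tight latency budgets also favor the small model.
    if req.max_latency_ms < 500:
        return "small-local"
    # Only open-ended reasoning is worth the cost of the frontier model.
    if req.needs_complex_reasoning:
        return "frontier-api"
    return "small-local"
```

The order of the checks is the design choice worth debating. Here privacy overrides everything, so a sensitive request that also needs complex reasoning still stays local; a team with different constraints might reject such a request or redact it first.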

Governance is now an engineering discipline

The EU AI Act’s first prohibitions took effect in February 2025. Governance has become an architectural requirement from the start of a project. Production AI systems now need audit trails, explainability layers, bias detection pipelines, and clear human-in-the-loop escalation paths. IP indemnification has become a standard enterprise requirement for AI coding tools, with most major vendors now offering some form of coverage and code provenance tracking. Engineers now need to:

- Build observability into agent chains from the start, logging every LLM call, every tool invocation, and every retrieval decision

- Design human-in-the-loop checkpoints for high-stakes autonomous actions before production deployment

- Evaluate AI coding tools for IP indemnification and code reference features before enterprise rollout

- Implement data residency controls in RAG pipelines, especially for regulated industries

- Establish evaluation benchmarks for hallucination rates and treat them as engineering KPIs, not acceptance criteria
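The first two items in this list can be sketched with the standard library alone. The `audited` decorator, the `ApprovalRequired` exception, the refund tool, and the 1,000 approval limit are all hypothetical; a production system would ship these records to a tracing backend rather than a plain logger.

```python
import functools
import json
import logging
import time
from typing import Optional

logger = logging.getLogger("agent.audit")


def audited(kind: str):
    """Log one structured record per call; kind is 'llm', 'tool', or 'retrieval'."""
    def wrap(fn):
        @functools.wraps(fn)
        def inner(*args, **kwargs):
            start = time.perf_counter()
            status = "error"
            try:
                result = fn(*args, **kwargs)
                status = "ok"
                return result
            finally:
                # Runs on success and failure, so failed calls are logged too.
                logger.info(json.dumps({
                    "kind": kind,
                    "name": fn.__name__,
                    "status": status,
                    "duration_ms": round((time.perf_counter() - start) * 1000, 2),
                }))
        return inner
    return wrap


class ApprovalRequired(Exception):
    """Raised when a high-stakes action lacks human sign-off."""


@audited("tool")
def issue_refund(order_id: str, amount: float,
                 approved_by: Optional[str] = None,
                 limit: float = 1000.0) -> str:
    # Human-in-the-loop checkpoint: large refunds need a named approver.
    if amount > limit and not approved_by:
        raise ApprovalRequired(f"refund of {amount:.2f} needs human approval")
    return f"refunded {amount:.2f} for {order_id}"
```

An agent calling `issue_refund("A2", 5000.0)` gets an `ApprovalRequired` exception instead of a completed action, and the audit log records the attempt either way. The same decorator can wrap LLM calls and retrieval functions so the whole chain shares one record format.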

What this means for engineering teams

At Kibernum, our Elite Consulting Unit approaches GenAI projects from a search and retrieval perspective, because the key to any reliable AI system is accessing data securely and surfacing the right information with every query. Across our 1,500+ consultants, we’re seeing these trends play out in real client engagements: teams that invest in agentic architecture, robust RAG pipelines, and AI-fluent developers are outpacing those still in pilot mode.

The developers winning in 2026 aren’t chasing every new model release. They’re doing the unglamorous work: building reliable pipelines, establishing governance, measuring real outcomes, and iterating on what works. The foundation you build today, in tooling fluency, agentic architecture, and data infrastructure, is the competitive advantage you’ll compound for years.